Video-Driven AI Modelling: The Under-Recognized Inflection Poised to Reshape AI & Automation

Emerging beyond the current dominance of language-based generative AI, video-trained world models represent a foundational shift in artificial intelligence development. This signal, often sidelined amid the focus on text and language-centric algorithms, could fundamentally transform automation’s role across industries, regulatory regimes, and capital allocation within the next decade.

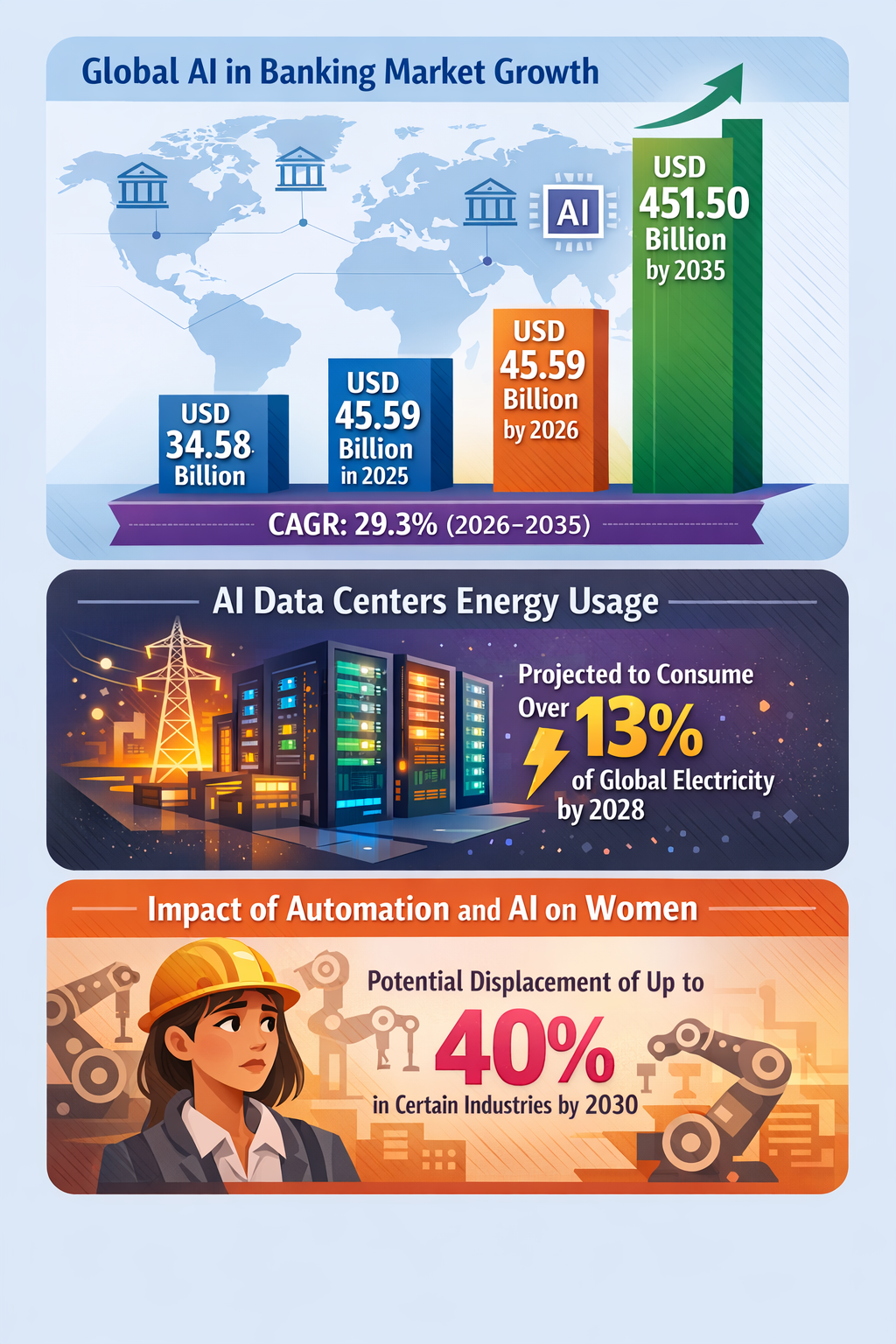

While energy demands, geopolitical AI applications, and workforce displacement have dominated discourse, the pivot toward video-derived contextual understanding signals an inflection point in AI’s structural capacity to model, predict, and automate complex real-world environments. This shift extends AI’s operational scope beyond textual interaction into spatial-temporal reasoning, enabling systemic leaps in domains such as infrastructure management, autonomous supply chains, and defense systems. The implications could reorder industrial value chains, spur new regulatory frameworks focused on embodied AI cognition, and redefine competitive positioning in automation technologies by 2030.

Signal Identification

This development is an emerging trend with the characteristics of an inflection indicator: it could recalibrate the foundational architecture of AI systems. Unlike incremental improvements in language models, video-trained world models draw on multi-modal sensory data to build a richer understanding of their environment and to support decision-making. Significant scaling is expected within a 5–10 year horizon, supported by current research momentum (MarketingProfs 22/05/2026). Plausibility is medium: the technology is nascent and faces open technical challenges, but investment is robust and interest spans multiple sectors. Exposed sectors include advanced robotics, defense, supply chain logistics, data center management, and urban infrastructure.

What Is Changing

Recent AI development patterns reflect a gradual, yet systemic, shift from language-centric models to embodied, video-based world representations facilitating complex situational awareness. Traditional natural language processing models excel in pattern recognition within text but lack intrinsic understanding of spatial dynamics and temporal contexts required for autonomous decision-making across varied ecosystems. This gap is being filled by video-trained models that ingest and learn from real-world video data streams, enabling AI to “see,” predict trajectories, and coordinate multi-agent activities in dynamic environments.

For instance, the growing number of hyperscale data centers worldwide is projected to accelerate the deployment of advanced robotics for infrastructure management, systems that rely on visual input to navigate and optimize operations (Precedence Research 21/05/2026). Similarly, in the defense sector, Ukraine’s development of drone swarms with autonomous navigation underscores the growing reliance on AI systems whose spatial-temporal reasoning is driven by video analytics (United24 Media 19/05/2026). These applications point to a sector-wide shift toward AI that operates beyond linguistic abstraction and interacts directly with its physical environment.

Concurrently, AI-infused supply chains are embedding predictive capabilities that depend not only on data flow analytics but also on real-time visual assessments of goods, equipment, and environmental conditions, enabling resilience through proactive intervention (PMC 15/05/2026). This signals a qualitative leap in automation’s role—from reactive task execution to anticipatory system orchestration.

The underappreciated structural theme is the pivot toward AI systems operating as contextual world models trained on video inputs, enabling richer situational awareness that fundamentally expands automation’s scope. Unlike incremental improvements in AI speed or efficiency, this signal portends a systemic capacity shift with broad cross-sector ramifications.

Disruption Pathway

The trajectory from emerging video-trained AI to structural change follows a staged intensification driven by technological, capital, and policy factors. Initial accelerants include advances in computer vision, growing edge computing capability, and exponential growth in video data generation, which together support rapid iterative improvement in model accuracy. Capital inflows into video-AI robotics, in areas such as data center infrastructure and autonomous defense systems, signal market confidence and fund deployment at scale.

Strains emerge as incumbent industrial models and regulatory frameworks, built around language- and text-based AI or simple sensor automation, struggle to manage the complexity, accountability, and safety implications of AI systems that interpret and act on rich environmental data. For example, robotic infrastructure management powered by video AI may disrupt labor dynamics as operators are replaced, and may also strain liability and compliance regimes that are currently ill-equipped for AI’s embodied cognition.

Structural adaptations may include new governance paradigms that prioritize dynamic validation of environmental AI, updated regulatory compliance models that incorporate video-data provenance and situational context audits, and capital reallocation toward firms that master video-based AI integration. Feedback loops could reinforce this reconfiguration as successful early adopters realize efficiency gains, prompting competitors and regulators to follow suit. However, unintended consequences such as surveillance escalation, data privacy conflicts, or opaque autonomous decision-making may provoke societal backlash or regulatory clampdown, slowing adoption.

Dominant models could shift from centralized, language-focused AI services to distributed, real-time video-enabled AI ecosystems embedded in physical infrastructure and autonomous fleets, generating new competitive fronts and industrial realignments.

Why This Matters

For senior decision-makers, this trend signals a shift in capital allocation priorities toward AI investments that emphasize multi-modal sensory integration rather than pure language model scaling. Industrial strategies anchored in language-centric AI risk obsolescence as video-based AI transforms operational paradigms in infrastructure, defense, and logistics.

Regulatory frameworks will likely require adaptation to address the novel challenges of video-trained AI, including new standards for environmental model validation, safety certification, and data governance. Failing to anticipate this could induce systemic risk within sectors deploying embodied AI extensively.

Supply chains could be reshaped as AI-powered vision systems enable hyper-responsive, autonomous orchestration, diminishing reliance on human intermediaries and traditional oversight models. Consequently, firms unable to embed or compete with video-trained AI may face erosion of market position or stranded asset risk.

Governance consequences include the necessity to balance innovation facilitation with privacy, surveillance, and safety considerations unique to video-enabled AI, demanding novel interdisciplinary policy approaches.

Implications

This video AI inflection may reshape AI ecosystems by enabling automation to transcend text-bound context into real-world adaptability, unlocking previously unreachable efficiencies and innovation frontiers. It could compel capital markets to reprioritize investments toward video-capable AI frameworks and related hardware.

This development is not merely an upgrade of existing AI modalities; it could constitute a structural shift in the industrial architecture of AI-enabled automation. It might also raise contentious regulatory and societal issues around the volume of data collected, algorithmic interpretability, and human oversight.

An alternative interpretation is that technical complexity or privacy and regulatory pushback could delay or limit widespread adoption, relegating video-trained AI to niche uses. Still, the confluence of data volume growth, technological advances, and sectoral deployments suggests that scaling within a decade is credible.

Early Indicators to Monitor

- Patent filings and R&D disclosures focused on video-trained AI architectures and embodied cognition models.

- Shifts in venture capital and corporate capital allocation toward platforms that integrate video AI with robotics.

- Procurement and deployment announcements of advanced robotics using video AI in hyperscale data centers and defense.

- Regulatory drafts or standards initiatives addressing liability and safety for video-based autonomous systems.

- Academic and industry benchmark reports demonstrating that video-trained world models outperform text-only systems.

Disconfirming Signals

- Major technical failures or slowdowns in training scalable, reliable video-based AI models.

- Regulatory restrictions imposing severe constraints on video data usage or real-time autonomous decision-making.

- Market pullback from key early adopters within data center automation or defense sectors.

- Emergence of radically different AI paradigms (e.g., neuromorphic computing) that sidestep video-model reliance.

Strategic Questions

- How can capital deployment strategies balance investment in proven language models with emerging video-trained AI paradigms?

- What regulatory frameworks are needed to ensure safe, transparent, and ethical deployment of video-based autonomous systems?

Keywords

Video-trained AI; Embodied Cognition; Automation Inflection; Capital Allocation; Regulatory Frameworks; Industrial Strategy; Data Center Robotics; Autonomous Defense

Bibliography

- The next frontier of artificial intelligence will emerge from video-trained world models rather than language models alone. MarketingProfs. Published 22/05/2026.

- Increasing hyperscale data centers globally is projected to accelerate the deployment of advanced robotics systems for infrastructure management and automation tasks. Precedence Research. Published 21/05/2026.

- Dozens of Ukrainian companies are implementing AI, creating drone swarms, and building autonomous systems in which robots and drones, rather than people, will fight thanks to artificial intelligence. United24 Media. Published 19/05/2026.

- Concurrently, the wave of intelligent technologies, epitomised by generative artificial intelligence (AIGC), is infusing new, more predictive and creative potential into enhancing supply chain resilience. PMC. Published 15/05/2026.

- Automation and AI could displace up to 40% of women in certain industries by 2030. Fawcett Society. Published 10/05/2026.